Key Components of a Professional Research Methodology

For the last two decades Evans Data has been delivering the most comprehensive worldwide developer surveys available. They are not only in-depth, but also accurate and unbiased. All our research conforms to established principles endorsed by the Marketing Research Association, ESOMAR and CASRO. Here are some of the key components to keep in mind when designing a reliable quantitative research study.

Critical Methodology Points

When evaluating the quality of sample data, the following criteria should be used:

- The sample source must be unbiased

- Sample pool cannot include lists from specific vendors

- Sample pool cannot be recruited from sources that are oriented towards ONE technology

- The sample should be pure; weighting should not be used to try to "fix" a bias problem

- No branded incentives can be used

Evans Data Methodology Rules

- All samples are unbiased

- No vendor lists have EVER been used in our panel recruitment

- No platform-oriented or language-oriented lists have EVER been used

- No branded incentives

- No weighting is applied to the survey samples. Evans Data uses only PURE, unweighted samples

- Margin of error is always (+/-) 5% or better for all reports

- Global surveys have a margin of error of (+/-) 2.5% or better

With more than 20 years of developer-centric focus and an increasing emphasis on IT pros, Evans Data and our analysts have a heritage that no one else can match. To enhance our analysis, we can also draw on a rich store of data generated over the years, along with the thousands of new data points we generate each year. No other company is so steeped in developer data and understanding. We are the experts.

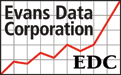

Accuracy of Results: Margin of Error

The global population of software developers is nearly 24 million, so we couldn’t possibly survey every developer. The Margin of Error describes how closely we can reasonably expect the results from the sample to match those of the true population of developers. The Margin of Error decreases as samples get larger, BUT there are diminishing returns after about 1500 respondents.

Here are some standard sample sizes and MOEs:

- Margin of Error at 384 = (+/-) 5% (industry standard)

- Margin of Error at 600 = (+/-) 4%

- Margin of Error at 1000 = (+/-) 3%
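These figures can be reproduced with the usual margin-of-error formula for a proportion. The sketch below is illustrative only: it assumes a 95% confidence level (z = 1.96), the worst-case proportion p = 0.5, and a simple random sample, none of which is stated explicitly above. The function name `margin_of_error` is our own.

```python
import math


def margin_of_error(n, z=1.96, p=0.5):
    """Margin of error for a proportion estimated from a sample of size n.

    Assumptions (not stated in the text): z = 1.96 for 95% confidence,
    p = 0.5 as the worst case, and a simple random sample.
    """
    return z * math.sqrt(p * (1 - p) / n)


# Print the margin of error, in percent, for the standard sample sizes.
for n in (384, 600, 1000):
    print(f"n={n}: (+/-) {margin_of_error(n) * 100:.1f}%")
```

With a developer population in the millions, a finite population correction would change these values only negligibly, so it is left out of the sketch.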

The difference between...

- 400 and 1000 = (4.9-3.1) = 1.8%

- 600 and 1000 = (4.0-3.1) = 0.9%

- 1000 and 2000 = (3.1-2.2) = 0.9%

Because survey prices can rise considerably with additional respondents, adding respondents beyond 1500 is not usually worthwhile. The difference in Margin of Error between 2000 respondents and 20,000 is less than 1.5%.
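The diminishing returns can be checked with a short calculation. This is a rough sketch under the same common assumptions (95% confidence, worst-case p = 0.5, simple random sample); `moe_pct` and `gain` are hypothetical helper names used only for illustration.

```python
import math


def moe_pct(n, z=1.96, p=0.5):
    """Margin of error in percent (assumes 95% confidence and p = 0.5)."""
    return 100 * z * math.sqrt(p * (1 - p) / n)


def gain(n_small, n_large):
    """Percentage points of margin of error removed by enlarging the sample."""
    return moe_pct(n_small) - moe_pct(n_large)


# Compare the improvement bought by each increase in sample size.
for small, large in [(400, 1000), (600, 1000), (2000, 20000)]:
    extra = large - small
    print(f"{small} -> {large}: {gain(small, large):.2f} points "
          f"for {extra} extra respondents")
```

Because the Margin of Error shrinks with the square root of the sample size, a tenfold increase from 2000 to 20,000 respondents buys just under 1.5 points.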

The sample should be large enough to be accurate to (+/-) 5%, BUT an unbiased sample is MUCH MORE IMPORTANT: biased samples can’t be projected onto the larger universe of developers!

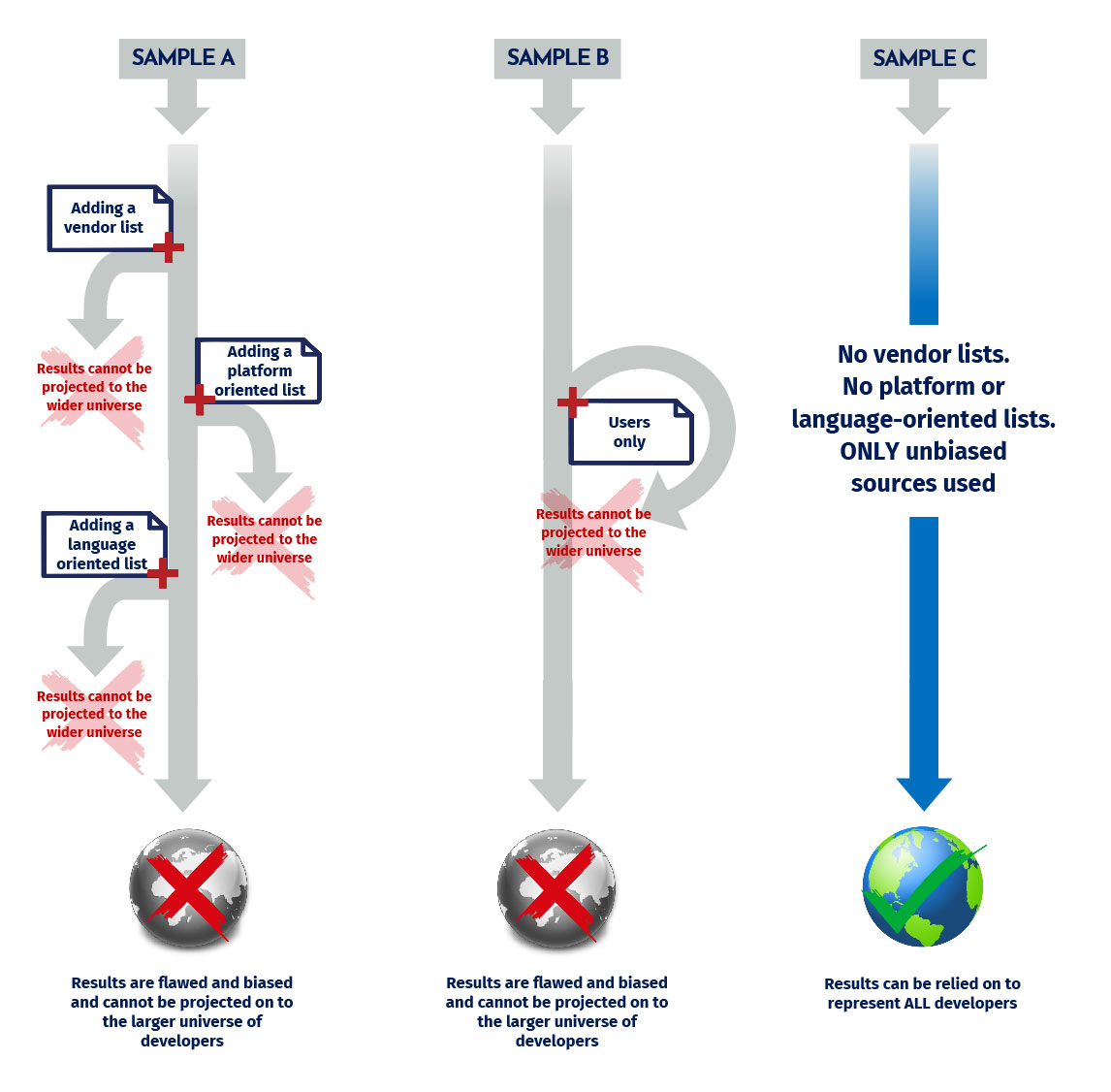

Most Crucial Factor: Sample Quality

Only an unbiased sample can be projected onto the rest of the developer universe. The sample will be biased if it draws on sources tied to a specific vendor or technology. Incentives MUST be unbiased too: no branded products.

User Surveys Are Subject to Bias

User surveys can help you to explore your users’ tendencies. They can shine a light on your users’ demographics, tech use and satisfaction.

- User surveys should be BLIND – users will be more honest and objective with a third party

- User surveys can help private vendors measure satisfaction

- User surveys are best utilized by media and communities to profile their audience for advertisers

- User surveys ONLY show tendencies of your users – they can’t be projected on to the rest of the developer universe.

If your sample isn’t representative, it will be subject to bias. Keep in mind that with user surveys, certain groups may be over-represented, and their opinions magnified while others may be under-represented.
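A small worked example shows how over-representation magnifies one group's opinions. All figures below are hypothetical and chosen only for illustration, as are the group names and the helper `expected_share`.

```python
def expected_share(mix, yes_rate):
    """Expected share of 'yes' answers, given each group's share of the
    respondents (mix) and each group's rate of answering 'yes'."""
    return sum(mix[group] * yes_rate[group] for group in mix)


# Hypothetical figures: how often each group answers 'yes' to a question.
yes_rate = {"vendor_users": 0.80, "other_developers": 0.20}

# Hypothetical make-up of the whole developer population ...
population_mix = {"vendor_users": 0.25, "other_developers": 0.75}
# ... and of a user survey recruited mostly from the vendor's own users.
user_survey_mix = {"vendor_users": 0.90, "other_developers": 0.10}

true_share = expected_share(population_mix, yes_rate)
survey_share = expected_share(user_survey_mix, yes_rate)

print(f"Population: {true_share:.0%} yes")
print(f"User survey: {survey_share:.0%} yes")
```

In this made-up case the user survey reports 74% where the population figure is 35%. Collecting more responses from the same source would not close that gap, because the gap comes from who was asked rather than how many.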

Neutral Incentives Best Practices

- Incentives to participate cannot be associated with any technology, or the respondents may skew towards users of that technology

- Cash, information or points leading to cash gift cards are acceptable neutral incentives

- Blind surveys that do not identify the sponsors are needed for unbiased results

Weighting Can Be a Slippery Slope

Weighting describes a practice where a market research company assigns a higher value to the answers of one set of respondents than to those of others. In some cases this has a legitimate purpose, usually to test a particular hypothesis. However, it’s bad practice to use weighting to cover sample biases.

The best samples are PURE and not weighted. Weighting should never be used to cover biased sample collection practices, such as using a vendor list.
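To make the mechanics concrete, here is a minimal sketch of what weighting does to a result. The ten responses, the two groups and the 50/50 target are all hypothetical, and `weighted_share` is an illustrative helper rather than a description of any particular vendor's procedure.

```python
def weighted_share(responses, weights):
    """Weighted share of 'yes' answers (1 = yes, 0 = no)."""
    return sum(r * w for r, w in zip(responses, weights)) / sum(weights)


# Hypothetical sample of 10 respondents: 8 from group A, 2 from group B.
groups = ["A"] * 8 + ["B"] * 2
responses = [1, 1, 1, 1, 1, 1, 0, 0, 1, 0]

# Assumed target: each group is half of the real population.
sample_share = {"A": 0.8, "B": 0.2}
target_share = {"A": 0.5, "B": 0.5}

# Each respondent is scaled by target share / sample share for their group.
weights = [target_share[g] / sample_share[g] for g in groups]

unweighted = sum(responses) / len(responses)
weighted = weighted_share(responses, weights)

print(f"Unweighted: {unweighted:.1%} yes")
print(f"Weighted:   {weighted:.1%} yes")
```

Here each group B answer counts four times as much as a group A answer, so half of the weighted result rests on just two people. Weighting only rescales the answers already collected; it adds no information about developers the recruiting never reached.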

Throughout the years that Evans Data has been recruiting developers to participate in surveys, we have done so from sources that are as unbiased as possible. Although we have used more than 100 different individual sources for recruiting, the following principles are strictly and consistently applied:

- No vendor lists have ever been used in EDC subscription surveys and none have ever been added to the panel

- No platform-specific lists have ever been used in any EDC general subscription surveys and none have ever been added to the panel

- No language-specific lists have ever been used in any EDC subscription surveys and none have ever been added to the panel

In this way we provide the most eclectic and unbiased sample available anywhere. With thousands of developers chosen in a deliberately unbiased way from a wide variety of neutral lists, our data truly provides in-depth looks at representative samples of the developer population.